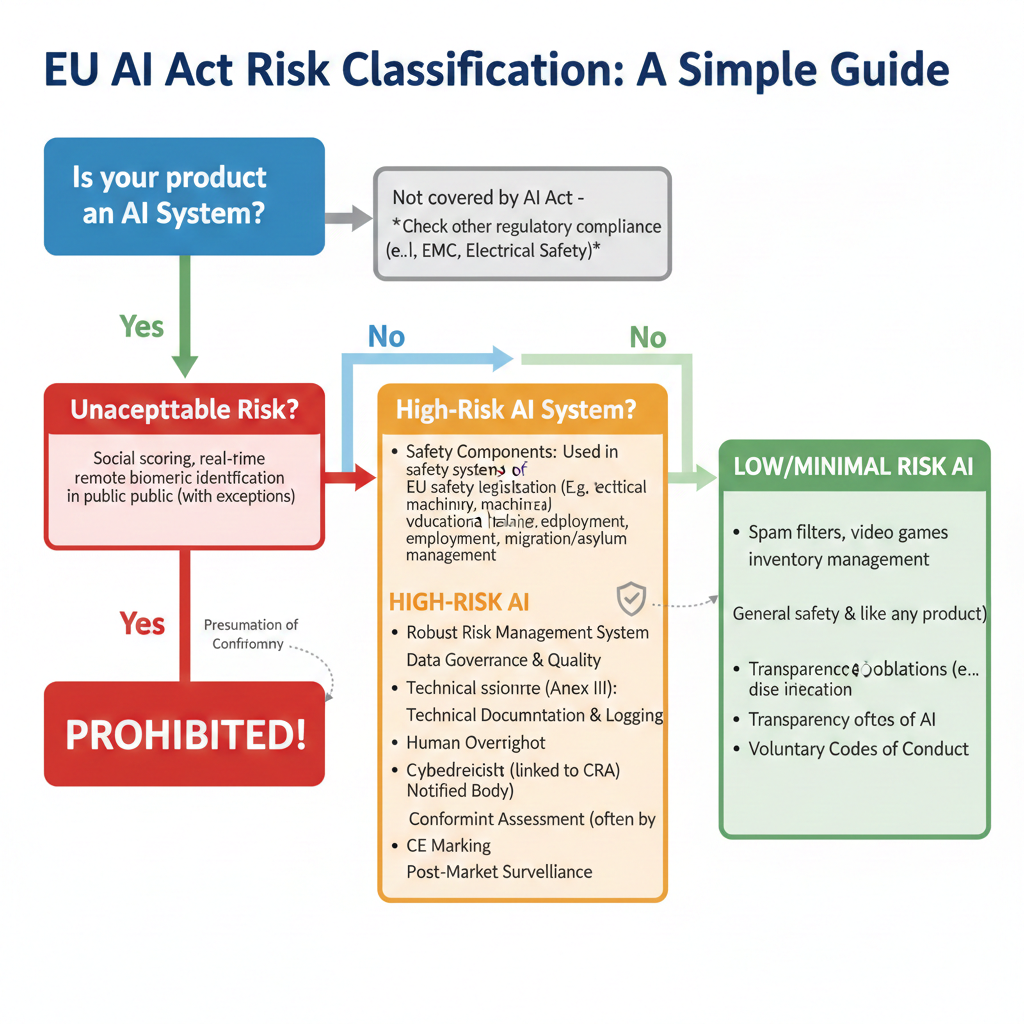

Navigating the Presumption of Conformity

The European Union has ushered in a new era of product certification. A physical product’s traditional electrical safety is now intrinsically linked to its digital resilience. This complexity is compounded for manufacturers developing high-risk Artificial Intelligence (AI) systems. You suddenly find yourself asking what actually separates the EU AI Act from the Cyber Resilience Act (CRA). Both regulations require extensive technical documentation, demand risk management systems, and mandate post-market surveillance. But you should not view this as double the work. The EU legislative bodies recognized the potential for duplication and created a specific mechanism to streamline the process: the Presumption of Conformity. Understanding how the AI Act and the CRA overlap is critical to your compliance strategy.

The regulatory landscape is shifting from static hardware checks to dynamic software oversight. In the past, a technician could verify the safety of a device once and feel relatively confident for its lifecycle. Now, the introduction of AI and constant connectivity means that a “safe” product on Monday could become a “hazardous” one on Tuesday due to a new software vulnerability. To address this, the EU has moved toward a “Horizontal” legislative approach. This means rules like the CRA apply to almost everything with a chip, while “Vertical” rules like the AI Act apply only to specific technologies.

The Overlap: Where AI Safety Meets Digital Resilience

At first glance, the two Acts appear to govern different domains. The EU AI Act focuses on the quality and transparency of the AI model itself. It aims to protect fundamental rights from algorithmic risk. Conversely, the CRA focuses on the ‘product with digital elements’. It establishes a horizontal baseline for cybersecurity across the entire product lifecycle.

The link becomes explicit in Article 15 of the AI Act. This article mandates that high-risk AI systems must achieve an appropriate level of robustness and cybersecurity. This includes:

- Resilience against manipulation, malfunction, and unauthorized access.

- Protection against adversarial attacks, such as data poisoning or model manipulation.

Any high-risk AI system that is also a connected device (e.g., an AI-driven medical device) is a ‘product with digital elements’. Therefore, it is covered by both regulations. This creates a risk of needing two separate certification paths for the same cybersecurity requirements.

The Synergy: Leveraging the Presumption of Conformity

Fortunately, the EU has built a bridge between these two frameworks to avoid redundant testing and auditing. This bridge is known as the Presumption of Conformity. Simply put, the regulation offers a deal: if a high-risk AI system meets the core cybersecurity requirements of the Cyber Resilience Act, it is presumed to comply with the cybersecurity requirements of the AI Act. The presumption covers cybersecurity only; the remaining AI Act obligations still have to be met on their own terms. For engineering teams, this mechanism is a lifesaver.
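The deal described above can be read as a small decision rule. The following is a minimal sketch of that reasoning only, not a legal test: the `Product` record, its field names, and both helper functions are hypothetical and chosen purely for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    """Hypothetical product record; the field names are illustrative only."""
    name: str
    is_high_risk_ai: bool             # classified as high-risk under the AI Act
    has_digital_elements: bool        # a 'product with digital elements' under the CRA
    cra_cyber_requirements_met: bool  # CRA core cybersecurity requirements evidenced


def applicable_acts(product: Product) -> set[str]:
    """Return which of the two regulations the product falls under."""
    acts = set()
    if product.is_high_risk_ai:
        acts.add("AI Act")
    if product.has_digital_elements:
        acts.add("CRA")
    return acts


def ai_act_cybersecurity_presumed(product: Product) -> bool:
    """True when CRA evidence can stand in for the AI Act cybersecurity requirements.

    This only mirrors the article's description: the product must be covered by
    both Acts and must have met the CRA's core cybersecurity requirements.
    """
    covered_by_both = applicable_acts(product) == {"AI Act", "CRA"}
    return covered_by_both and product.cra_cyber_requirements_met
```

For an AI-driven medical device that is connected and has its CRA evidence in place, `ai_act_cybersecurity_presumed` returns `True`; if the CRA evidence is missing, it returns `False` and the cybersecurity case would have to be made separately.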

Technician’s Note: This is the most important strategic takeaway for the next five years. Do not run a separate cybersecurity audit for AI Act compliance and another for CRA certification. Focus on building a single, robust cybersecurity framework that satisfies the CRA.

By aligning your testing phases, you ensure that the evidence gathered for one regulation serves the other. This prevents the “audit fatigue” that often plagues large-scale manufacturing projects. It also ensures that your technical file remains a single source of truth for all digital and algorithmic safety claims.

Technical Documentation and Conformity Assessment

The Presumption of Conformity offers a streamlined route. However, it does not apply universally. The documentation burden remains substantial. You must carefully assess your product against both regulations:

- High-Risk AI Classification: Does the AI system fall under Annex I or Annex III of the AI Act? If yes, extensive AI-specific technical documentation is required.

- CRA Criticality: Is the underlying digital product classified as ‘critical’ or ‘important’ under the CRA? If so, the conformity assessment procedure will be stricter. It may require mandatory third-party assessment by a Notified Body.
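The two questions above can be captured in a short triage helper. This is a rough sketch under stated assumptions: it presumes the product is already in scope of the CRA, and the `CraCategory` enum, the `documentation_plan` function, and the wording of the returned items are all hypothetical.

```python
from __future__ import annotations

from enum import Enum


class CraCategory(Enum):
    """Hypothetical labels for how the CRA classifies the underlying product."""
    DEFAULT = "default"
    IMPORTANT = "important"
    CRITICAL = "critical"


def documentation_plan(is_high_risk_ai: bool, cra_category: CraCategory) -> list[str]:
    """List the documentation and assessment items to plan for.

    Assumes the product is a 'product with digital elements', so CRA
    documentation is always included.
    """
    plan = ["CRA technical documentation"]
    if is_high_risk_ai:
        # Annex I or Annex III of the AI Act applies.
        plan.append("AI Act technical documentation")
    if cra_category is not CraCategory.DEFAULT:
        # 'Important' and 'critical' products face a stricter procedure.
        plan.append("Check whether a Notified Body assessment is required")
    return plan
```

Running the triage early, before design freeze, makes it easier to budget for a third-party assessment if one turns out to be needed.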

A key challenge is the continuous nature of compliance. The CRA mandates a full product lifecycle approach, including a published support period and continuous vulnerability patching. Because the presumption rests on meeting the CRA’s requirements, any failure to perform security updates under the CRA can directly jeopardize the product’s ongoing compliance with the AI Act. The two are inextricably linked.

Integrating Your Risk Management

Your risk management system (RMS) for the AI Act must integrate the vulnerability management process required by the CRA. The two cannot exist as separate, siloed documents.

This is where many manufacturers fail. They treat the risk file as a one-time checklist. To succeed, you must shift your mindset and treat risk analysis as a strategy rather than a formality. Your risk file should be a living document that evolves with every software patch and model update.

I recommend using standards like ISO/IEC 42001 (AI Management System) to build this unified structure. This ensures both the robustness of the model and the security of the underlying platform are documented together. Regulatory teams must now cooperate more closely with software security architects than ever before. This is the new reality of CE marking in the digital era.
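One way to picture a unified, living risk file is a single register that holds both model risks and software vulnerabilities, and that flags entries not revisited since the latest release. The sketch below is an assumption-laden illustration: `RiskEntry`, its fields, and `entries_needing_review` are hypothetical names, not part of any standard or regulation.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class RiskEntry:
    """Hypothetical entry in a combined AI Act / CRA risk register."""
    risk_id: str
    description: str
    source: str                   # "ai_model" or "vulnerability"
    last_reviewed: date
    cve_id: Optional[str] = None  # only set for vulnerability entries
    mitigated: bool = False


def entries_needing_review(register: list[RiskEntry], last_release: date) -> list[str]:
    """Return IDs of entries last reviewed before the most recent patch or model update."""
    return [e.risk_id for e in register if e.last_reviewed < last_release]


def open_risks(register: list[RiskEntry]) -> list[str]:
    """Return IDs of entries that are not yet mitigated, regardless of source."""
    return [e.risk_id for e in register if not e.mitigated]
```

Keeping model risks and vulnerabilities in one structure means a single query after each release shows what the regulatory team and the security architects need to revisit together.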

Comparison: AI Act vs. Cyber Resilience Act

| Feature | EU AI Act | Cyber Resilience Act (CRA) |
| --- | --- | --- |
| Primary Goal | Human safety & ethical AI | Hardware & software cybersecurity |
| Scope | Specific AI use-cases (High-Risk) | All products with digital elements |
| Key Metric | Model transparency & accuracy | Vulnerability handling & patching |
| Lifecycle | Focus on training & deployment | Focus on the entire support period |
| Assessment | Self-assessment or Notified Body | Dependent on “Class” of product |

The interplay between these two is the cornerstone of modern European market access. The AI Act examines the “brain” of the device. Meanwhile, the CRA assesses the “skin” and “nerves” that connect it to the world. If either one is weak, the entire system is considered non-compliant under the New Legislative Framework.

The Digital Product Passport: A New Tool for Compliance

The integration of these regulations is not just a legal exercise. It is becoming a physical part of the product’s identity through the emergence of the Digital Product Passport (DPP). Under the new Ecodesign for Sustainable Products Regulation (ESPR), and alongside the documentation obligations of the Cyber Resilience Act, products will soon carry a machine-readable data carrier such as a QR code. It will provide instant access to safety and sustainability information. For a technician, this means that the technical documentation we used to hide in dusty binders will be accessible to market surveillance authorities, and even consumers, with a simple scan. This transparency stems from the “Horizontal” approach. It ensures that whether you are checking for AI bias or a software vulnerability, the data is structured, synchronized, and always up to date.

Strategic Implementation: Beyond the Checklist

To truly navigate the presumption of conformity, manufacturers must move from a “siloed” documentation style to an “integrated” lifecycle management approach. This means your Software Bill of Materials (SBOM) should not just list components for the CRA; it should also serve as the foundation for the AI Act’s transparency requirements by identifying the libraries and data sets used in your model. By merging these streams, you create a “compliance by design” culture in which every code commit can be checked automatically against both cybersecurity and AI safety benchmarks. This foresight transforms regulatory hurdles into a competitive advantage, allowing you to ship faster and with greater confidence in the complex European single market.
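A per-commit check of this kind could look roughly like the sketch below. It is an illustration only: the `Component` record is a simplified stand-in for a real SBOM format, the `compliance_findings` function and its two rules are assumptions, and the identifiers in the usage example are placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Component:
    """Hypothetical SBOM entry covering both software libraries and data sets."""
    name: str
    version: str
    kind: str  # "library" or "dataset"
    known_vulnerabilities: list[str] = field(default_factory=list)
    provenance: str = ""  # where a data set came from; empty if undocumented


def compliance_findings(sbom: list[Component]) -> list[str]:
    """Return human-readable findings; an empty list means the gate passes."""
    findings = []
    for c in sbom:
        # Cybersecurity side: any component with open vulnerabilities is flagged.
        if c.known_vulnerabilities:
            ids = ", ".join(c.known_vulnerabilities)
            findings.append(f"{c.name} {c.version}: open vulnerabilities ({ids})")
        # Transparency side: data sets need documented provenance.
        if c.kind == "dataset" and not c.provenance:
            findings.append(f"{c.name} {c.version}: data set has no documented provenance")
    return findings


if __name__ == "__main__":
    # Placeholder names and a placeholder CVE identifier, for illustration only.
    sbom = [
        Component("example-http-lib", "1.2.0", "library", ["CVE-0000-00000"]),
        Component("example-training-set", "2024-06", "dataset"),
    ]
    for finding in compliance_findings(sbom):
        print(finding)
```

In a pipeline, a non-empty findings list would fail the build, so the same inventory feeds both the vulnerability handling process and the model documentation.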

Regulatory Developments to Watch

- The Harmonized Standards for CRA: The specific standards that grant Presumption of Conformity, currently being drafted by CEN/CENELEC.

- AI Office Guidelines: Periodic updates from the newly formed EU AI Office on how to document “General Purpose AI.”

- The NIS2 Directive Interplay: How the CRA connects to the broader network security rules for critical infrastructure.

- UK and US AI Alignments: Whether the “Presumption of Conformity” logic will be accepted in non-EU markets.

Final Considerations

Navigating the Presumption of Conformity is not about finding a loophole; it is about finding efficiency. The EU wants to encourage innovation, not stifle it with endless paperwork. By understanding how the CRA provides the foundation for AI safety, you can design products that are secure by design and compliant by default. This proactive approach will save your team months of rework and thousands of euros in redundant testing fees.

The technician of the future must be as comfortable with a CVE (Common Vulnerabilities and Exposures) report as they are with a multimeter. We are no longer just checking wires; we are checking code. Welcome to the new frontier of regulatory compliance.