When we discuss risk analysis or compliance documentation, the expectation is that these are objective, technical, and factual processes. We envision engineers sitting around a table with calibrated instruments and regulatory handbooks, producing a cold, mathematical evaluation of a product’s safety. But let’s be honest: they’re often not as sterile as we pretend. Behind the charts, checklists, and polished wording lies a very human problem: cognitive and organizational bias. Whether you are filling out a risk analysis for a CE declaration, working on a compliance checklist for EMC, or performing a Failure Mode and Effects Analysis (FMEA) during product design, personal and team biases can shape the document into something that doesn’t reflect reality.

This divergence from reality isn’t necessarily born of malice or a desire to deceive. Most professionals in the regulatory field are deeply committed to safety. However, they are susceptible to the psychological shortcuts our brains take to process complex information. We often believe a risk is lower or less likely than it really is because of a “gut feeling” shaped by office politics, tight deadlines, or the fear of opening a Pandora’s box of redesigns. In this article, we will explore how these biases manifest in the certification process. More importantly, we will discuss how we can build more robust, honest frameworks to counter them.

Bias in risk assessment is a complex and well-studied topic; this article focuses on a few of its main points.

Rating Risks Based on What We Hope

Many compliance documents are filled in by people who are simply too close to the product. Designers, engineers, or project managers may unconsciously downplay risks they believe are already under control through their own clever engineering. This is often driven by “availability bias,” where if a specific failure hasn’t happened in the recent past, we assume it won’t happen in the future, even if the underlying design or environment has changed significantly.

But true compliance isn’t about documenting what has happened; it’s about anticipating what could happen under reasonable (and sometimes unreasonable) conditions. To navigate this, technical teams must move away from anecdotal evidence and toward rigorous, data-driven identification phases.

Technical Insight: Risk identification is the foundation of the entire process. According to recent literature reviews on risk management in product development, effective identification requires a mix of “top-down” (system-level) and “bottom-up” (component-level) approaches to ensure no blind spots are created by over-familiarity with the design.

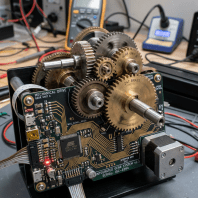
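The “top-down” view is often formalized as a fault tree: start from an undesired top event and break it into the combinations of basic events that could cause it. The sketch below is a minimal illustration, assuming statistically independent basic events. The event names and per-year probabilities are hypothetical and do not come from any real product.

```python
from math import prod

def and_gate(*probs: float) -> float:
    """All inputs must occur: P = p1 * p2 * ... (assumes independent events)."""
    return prod(probs)

def or_gate(*probs: float) -> float:
    """Any single input is enough: P = 1 - (1 - p1)(1 - p2)... (assumes independent events)."""
    return 1.0 - prod(1.0 - p for p in probs)

# Hypothetical per-year probabilities of basic events (illustrative only).
p_thermal_pad_degrades = 1e-3
p_fan_fails = 5e-3
p_thermal_cutoff_fails = 1e-2
p_input_fuse_fails = 1e-4
p_short_circuit = 1e-3

# Top event "fire": (overheating AND thermal cutoff fails) OR (short circuit AND fuse fails).
overheating = or_gate(p_thermal_pad_degrades, p_fan_fails)
fire = or_gate(
    and_gate(overheating, p_thermal_cutoff_fails),
    and_gate(p_short_circuit, p_input_fuse_fails),
)
print(f"P(overheating) = {overheating:.3e}")
print(f"P(fire)        = {fire:.3e}")
```

A bottom-up FMEA of the same design would start from each component instead. Running both is what helps expose the blind spots that over-familiarity creates.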

For example, a product team designing a power supply might write in the FMEA that the risk of fire from thermal runaway is “low” because a similar thermal pad “has always worked well.” They skip over proper thermal stress testing in varied ambient temperatures. When the product hits the market and encounters real-world heat, it fails. The risk wasn’t low; it was just incorrectly assessed due to a lack of detached, objective scrutiny.
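The thermal example can be made concrete with the common steady-state estimate T_j = T_a + P × θ_JA, where the thermal pad is part of the junction-to-ambient thermal resistance θ_JA. The sketch below sweeps the ambient temperature using hypothetical values for dissipated power, θ_JA, and maximum junction temperature. It is a first-order check, not a substitute for actual thermal stress testing.

```python
# Hypothetical values for illustration only; use datasheet and measured data in practice.
POWER_W = 2.5            # power dissipated in the component, watts
THETA_JA_C_PER_W = 30.0  # junction-to-ambient thermal resistance incl. thermal pad, degC/W
TJ_MAX_C = 125.0         # maximum rated junction temperature, degC

def junction_temp(ambient_c: float, power_w: float = POWER_W,
                  theta_ja: float = THETA_JA_C_PER_W) -> float:
    """Steady-state estimate: Tj = Ta + P * theta_JA."""
    return ambient_c + power_w * theta_ja

def sweep(ambients_c):
    """Return (ambient, junction temperature, margin to Tj max) for each ambient."""
    results = []
    for ta in ambients_c:
        tj = junction_temp(ta)
        results.append((ta, tj, TJ_MAX_C - tj))
    return results

for ta, tj, margin in sweep([25, 40, 50, 60]):
    status = "OK" if margin > 0 else "NO MARGIN"
    print(f"Ta={ta:>3} C  Tj={tj:6.1f} C  margin={margin:6.1f} C  {status}")
```

With these assumed numbers, the design has 25 °C of headroom on a 25 °C bench but none at 50 °C, exactly the kind of gap that testing only at room temperature would miss.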

Team Dynamics Kill Honesty

Risk analysis should be a moment of deep reflection where all team members put real issues on the table without fear of retribution. However, in many corporate environments, being the person who says, “Hey, this could fail,” is socially and professionally uncomfortable. When deadlines are tight and management is pushing for a “clean” certification path, “conformity bias” and “status quo bias” take over.

This social pressure leads to documentation that becomes a mere formality rather than a tool for effective risk prevention. Teams often soften their messaging or stay quiet to keep the project moving. Ironically, this behavior usually backfires. Problems swept under the rug during the design phase almost always resurface during lab testing or, worse, after the product is in the hands of the customer.

Addressing these risks early, when mitigation is still possible and relatively inexpensive, is far less painful than dealing with a global product recall.

Conversely, some teams suffer from “analysis paralysis,” fixating on highly improbable, far-fetched risks to avoid making difficult decisions. This is often a mask for a deeper fear of taking responsibility for the next phase of development. When risk avoidance is disguised as extreme caution, momentum is lost, and the project stalls not because of danger, but because of a lack of clear, bias-free leadership.

The Science of Risk Assessment and Mitigation

In the academic study of product development, risk management is generally divided into three critical stages: identification, assessment, and mitigation. To move beyond bias, we must treat each stage as a distinct discipline. Assessment involves not just guessing at numbers, but using qualitative and quantitative methods to determine the magnitude of a risk.

Effective mitigation then requires a prioritized list of actions that actually reduce each risk to an acceptable level, rather than merely documenting that the risk exists. Without a structured approach to these three pillars, the regulatory process remains a game of chance.

- Identification: Using checklists, brainstorming, and historical data to find “known unknowns.”

- Assessment: Applying tools like FMEA or Fault Tree Analysis to quantify the impact and probability.

- Mitigation: Implementing design changes, protective measures, or user instructions to lower the residual risk.

By treating these as separate, rigorous steps, we create “checkpoints” where bias can be caught. If the identification phase is thorough, it becomes much harder for a biased assessment to simply ignore a documented hazard during the later stages of the certification journey.
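One way to build such a checkpoint is to keep every identified hazard and its assessment in a single register, and to refuse to move on to mitigation while any hazard remains unrated. The minimal sketch below assumes the conventional FMEA 1 to 10 scales for severity (S), occurrence (O), and detection (D) and the classic Risk Priority Number, RPN = S × O × D. The `Hazard` class, the `assessment_checkpoint` function, and all ratings are hypothetical. Some current FMEA guidance (for example the AIAG-VDA handbook) replaces RPN with Action Priority tables, so treat the scoring rule as a placeholder for whatever your own procedure specifies.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Hazard:
    """One row of a hypothetical FMEA-style hazard register."""
    name: str
    severity: Optional[int] = None    # 1 (negligible) .. 10 (hazardous)
    occurrence: Optional[int] = None  # 1 (remote) .. 10 (very high)
    detection: Optional[int] = None   # 1 (almost certain to detect) .. 10 (cannot detect)

    def is_assessed(self) -> bool:
        return None not in (self.severity, self.occurrence, self.detection)

    def rpn(self) -> int:
        """Classic Risk Priority Number, S x O x D, ranging from 1 to 1000."""
        if not self.is_assessed():
            raise ValueError(f"hazard '{self.name}' has not been assessed")
        return self.severity * self.occurrence * self.detection

def assessment_checkpoint(register: list[Hazard]) -> list[Hazard]:
    """Gate between assessment and mitigation.

    Every identified hazard must be rated before mitigation planning starts,
    so a documented hazard cannot silently drop out of the analysis.
    Returns the hazards sorted from highest to lowest RPN.
    """
    unassessed = [h.name for h in register if not h.is_assessed()]
    if unassessed:
        raise RuntimeError("unassessed hazards: " + ", ".join(unassessed))
    return sorted(register, key=lambda h: h.rpn(), reverse=True)

# Hypothetical register: the third hazard was identified but never rated.
register = [
    Hazard("Thermal runaway in output stage", severity=9, occurrence=3, detection=6),
    Hazard("Connector pin corrosion", severity=4, occurrence=4, detection=5),
    Hazard("Creepage distance reduced by dust"),
]
try:
    assessment_checkpoint(register)
except RuntimeError as err:
    print(err)  # the unrated hazard blocks the move to mitigation
```

Sorting by RPN provides the prioritized action list the mitigation stage needs, while the gate itself is what stops an inconvenient hazard from disappearing between identification and assessment.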

Optimism Bias: Confusing “Unlikely” With “Impossible”

One of the most common sins in electrical safety documentation is rating a risk as “remote” or “improbable” simply because the failure mechanism is complex or rare, even though rare is not the same as impossible. This is “optimism bias”: wishful thinking disguised as engineering judgment. It is the dangerous habit of saying, “This could happen, but it probably won’t, so let’s just mark it as ‘Low’ and move on.”

To counter this, we must change the questions we ask during the assessment phase. Instead of asking if we think it will happen, we should ask:

- Is the failure mode physically possible?

- What are the absolute worst-case consequences if it does happen?

- Are we willing to bet the company’s reputation on the assumption that it won’t?

By ranking probability as measurably as possible, using Mean Time Between Failures (MTBF) data or stress test results, we base the ranking on evidence instead of opinion. A measurable, evidence-based ranking triggers the right decision-making processes, ensuring that resources are allocated to the risks that actually matter.
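As an illustration: if the failure rate is assumed constant (the exponential model under which a single MTBF figure is usually interpreted), the probability that a unit fails within an operating time t is P = 1 − exp(−t / MTBF). The sketch below applies that formula and maps the result onto probability bands. The bands, the 500,000-hour MTBF, the five-year continuous-use period, and the fleet size are all hypothetical. The constant-rate assumption does not hold for wear-out mechanisms such as electrolytic capacitor aging, where stress test data should take precedence.

```python
import math

def failure_probability(mission_hours: float, mtbf_hours: float) -> float:
    """P(at least one failure within mission_hours), assuming a constant
    failure rate (exponential model): P = 1 - exp(-t / MTBF)."""
    if mtbf_hours <= 0:
        raise ValueError("MTBF must be positive")
    return 1.0 - math.exp(-mission_hours / mtbf_hours)

# Hypothetical probability bands (upper bound, label); real bands come from your risk procedure.
BANDS = [
    (1e-6, "Improbable"),
    (1e-4, "Remote"),
    (1e-2, "Occasional"),
    (1e-1, "Probable"),
]

def probability_band(p: float) -> str:
    """Return the first band whose upper bound p does not exceed."""
    for upper, label in BANDS:
        if p <= upper:
            return label
    return "Frequent"

# Hypothetical scenario: five years of continuous use and a fleet of 10,000 units.
hours = 5 * 365 * 24  # 43,800 h
p = failure_probability(hours, mtbf_hours=500_000)
units_in_field = 10_000
print(f"P(failure per unit) = {p:.4f} -> {probability_band(p)}")
print(f"Expected failing units in the field: {p * units_in_field:.0f}")
```

With these assumed numbers, an impressive-sounding MTBF still works out to roughly 8% of units failing within five years, several hundred units across the fleet, which is hardly “remote.”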

The “It Passed the Test” Mentality

There is a pervasive belief that passing a lab test is the “finish line” for risk management. However, passing a test once in a controlled environment is not a guarantee of long-term compliance. This is “anchoring bias,” where a team fixates on a single positive result (the lab report) and ignores the broader variability of mass production and real-world use.

Tests are snapshots in time. They don’t always account for component aging, user abuse, environmental fluctuations, or shifts in component tolerances. If a team doesn’t truly “own” the testing process, it is flying blind: it doesn’t understand why the product passed or how close it came to failing. For instance, hipot (dielectric withstand) and leakage current tests are often performed as checklist items without the team fully understanding what they measure or how much margin the product actually has.

Expert Tip: Always review the “margin of pass.” If your product passed an EMC emissions test by only 0.5 dB, you haven’t really solved the problem; you’ve just gotten lucky with that specific prototype.
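Reviewing the margin of pass is simple arithmetic once the scan data is available: in dB, the margin is the limit minus the measured level, and a small positive value is a pass with little headroom. The sketch below takes the worst reading across several units at each frequency, because one unit is only a snapshot. The frequencies, limit values, readings, and the 3 dB internal target are hypothetical placeholders, not values taken from any standard; use the limits and detector settings of the standard you are actually testing against.

```python
# Hypothetical scan data: (frequency MHz, limit dBuV/m, measured peak per unit dBuV/m).
# Several prototype units are included because one unit's result is only a snapshot.
SCAN = [
    (45.0,  40.0, [31.2, 33.0, 32.1]),
    (120.0, 43.5, [42.4, 43.0, 41.8]),
    (180.0, 43.5, [35.0, 36.4, 34.9]),
]

REQUIRED_MARGIN_DB = 3.0  # hypothetical internal design target, not from any standard

def worst_margins(scan):
    """Return (frequency, worst-unit margin in dB) for each frequency point.

    Margin = limit - highest measured level. A negative margin is a failure;
    a small positive margin is a pass with little headroom.
    """
    return [(freq, limit - max(readings)) for freq, limit, readings in scan]

def flag_low_margin(scan, required_db=REQUIRED_MARGIN_DB):
    """Frequencies whose worst-case margin is below the required design margin."""
    return [(f, m) for f, m in worst_margins(scan) if m < required_db]

for freq, margin in worst_margins(SCAN):
    print(f"{freq:7.1f} MHz  worst margin {margin:+5.1f} dB")
print("Below target:", flag_low_margin(SCAN))
```

Here the 120 MHz point passes on every unit, yet its worst-case margin is the same 0.5 dB described in the tip, so it would be flagged for design work rather than celebrated.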

Results must always be discussed and validated. A “Pass” in the lab should be the starting point for a discussion on how to maintain that performance across thousands of units in the field. If we rely solely on the certificate without understanding the physics behind it, we are setting ourselves up for future liability.

Ticking Boxes Doesn’t Mean Compliance

Let’s be blunt: many compliance documents are written with one goal in mind, “get the signature.” There’s pressure to close the file, not to open discussions. So risks are watered down, control measures are made generic, and mitigation becomes just another checkbox.

That’s procedural bias, and it turns a tool meant to help into a tool that hides problems.

In some companies, risk analysis is a recycled template, copied from a previous project with names changed. That’s not compliance. That’s a liability waiting to happen.

Strategic Steps to Eliminate Bias

If we want our compliance efforts to hold value, we must consciously restructure our internal cultures. This requires more than just better templates; it requires a shift in how we value honesty over speed.

- Create a Culture of Radical Honesty: Reward team members who identify “uncomfortable” risks early in the cycle.

- Use Empirical Evidence: Replace “gut feelings” with field data, stress test results, and user feedback logs.

- Appoint a Devil’s Advocate: In every risk review, assign one person the specific task of challenging every “Low Risk” assumption.

- Cross-Functional Reviews: Involve marketing, sales, and customer support. They often see how a customer actually uses a product, which is frequently very different from how an engineer intended it to be used.

- Live Documentation: Risk analysis is not a “one and done” task. It should be updated after prototypes, after certification, and certainly after the first six months of market feedback.
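Parts of the devil’s-advocate role and the “live documentation” habit can be supported by a simple automated check on the risk register. The minimal sketch below assumes a hypothetical register format (`RiskEntry`, with a rating, a list of evidence references, and the milestone at which the entry was last reviewed) and flags two things: “Low” ratings with no linked evidence, and entries that were not revisited at the current milestone. It supports, but does not replace, a human reviewer challenging each assumption.

```python
from dataclasses import dataclass, field

# Hypothetical lifecycle milestones at which the risk analysis should be revisited.
MILESTONES = ["prototype", "certification", "field_6_months"]

@dataclass
class RiskEntry:
    hazard: str
    rating: str                                         # e.g. "Low", "Medium", "High"
    evidence: list[str] = field(default_factory=list)   # test report IDs, field data, etc.
    last_reviewed: str = "prototype"                    # most recent milestone reviewed at

def challenge(entries, current_milestone):
    """Devil's-advocate pass: return the findings a reviewer should raise.

    - A "Low" rating with no linked evidence is treated as an unsupported assumption.
    - An entry not reviewed at the current milestone is treated as stale.
    """
    current = MILESTONES.index(current_milestone)
    findings = []
    for e in entries:
        if e.rating == "Low" and not e.evidence:
            findings.append(f"{e.hazard}: 'Low' rating has no supporting evidence")
        if MILESTONES.index(e.last_reviewed) < current:
            findings.append(f"{e.hazard}: not reviewed since '{e.last_reviewed}'")
    return findings

# Hypothetical register entries.
entries = [
    RiskEntry("Thermal runaway", "Low", evidence=[], last_reviewed="prototype"),
    RiskEntry("Leakage current above limit", "Low",
              evidence=["hypothetical report LC-01"], last_reviewed="certification"),
]
for finding in challenge(entries, "certification"):
    print(finding)
```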

By implementing these strategies, the regulatory process transforms from a bureaucratic hurdle into a genuine competitive advantage. Products become more reliable, recalls become rarer, and the brand’s reputation for safety becomes a tangible asset.

Integrating Research into Practical Safety Work

Current literature reviews emphasize that risk management is not a separate administrative task, but a core component of the design philosophy. The most successful organizations generate evidence to evaluate the effectiveness of their safety activities instead of relying on historical assumptions. This means that if you claim a specific protective circuit provides electrical safety, you should have the test data or the peer-reviewed evidence to back that claim up. Moving from a “feeling-based” system to an “evidence-based” one is crucial, because it directly counters the biases we have discussed.

Furthermore, these studies highlight that the effectiveness of risk management is directly tied to how well these activities support decision-making. If your risk document sits in a folder and never influences the design of the PCB or the choice of a component, it has failed its primary purpose. True compliance occurs when the risk assessment drives changes in the product, showing that the team prioritized user safety over the comfort of the status quo.

Final Thoughts: Documents as Bias Thermometers

Ultimately, a high-quality risk analysis reflects what a product truly is, rather than what we wish it to be. If our compliance documentation is skewed by pressure and perception, we aren’t just filling out paperwork incorrectly; we are exposing users and the business to avoidable harm. Let your documentation serve as a mirror—if it looks too perfect, it’s time to look a little closer.

To read more about risk analysis, take a look at the risk analysis strategies article.